Color space transformations can help address challenges related to illumination or lighting in the images. Geometric transformations work well when positional biases are present in the images, as in datasets used for facial recognition. Some common image transformations applied for data augmentation:

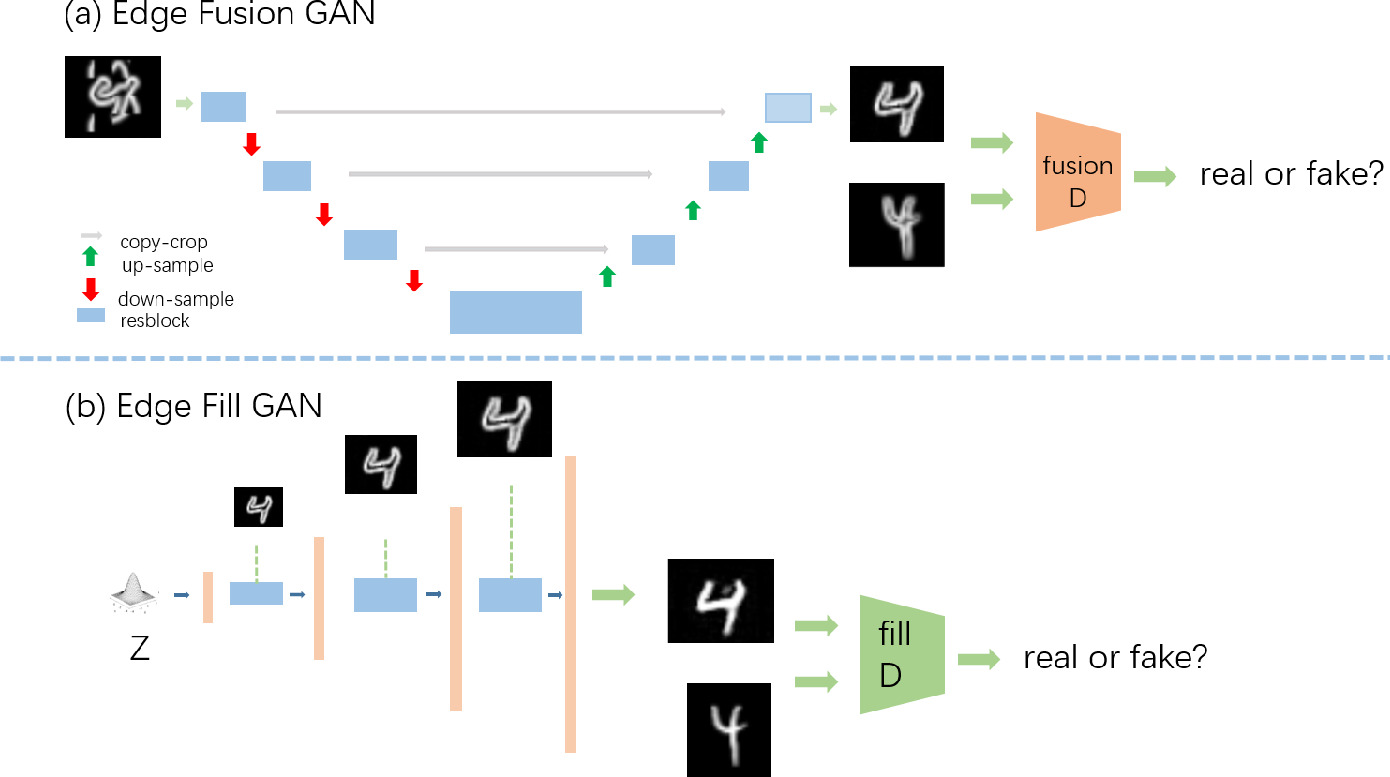


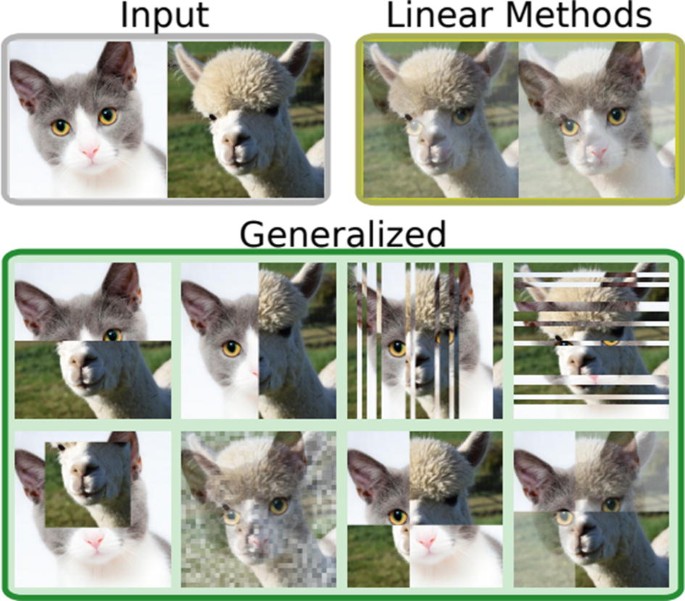

The Python code used for applying the transformations is shown in Appendix 1. Figure 1 shows the original image and the images after applying some of these transformations.

To the question of whether data augmentation works, the answer is an assured and cautious ‘yes’: assured since several case studies indicate that performance improves, and cautious since different augmentation techniques affect the scale of improvement differently.

The augmentation techniques used in deep learning applications depend on the type of data. To augment plain numerical data, techniques such as SMOTE or SMOTE-NC are popular. These techniques are generally used to address the class imbalance problem in classification tasks.

For unstructured data such as images and text, the augmentation techniques vary from simple transformations to neural-network-generated data, depending on the complexity of the application. The augmentation techniques for image and text data are discussed separately in the following sections.

Image Augmentation for Computer Vision Applications

Amongst the popular deep learning applications, computer vision tasks such as image classification, object detection, and segmentation have been highly successful. Data augmentation can be effectively used to train the DL models in such applications. Some of the simple transformations applied to images are geometric transformations such as flipping, rotation, translation, cropping, and scaling, and color space transformations such as color casting, varying brightness, and noise injection.
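A few of the simple transformations named above (flipping, rotation, cropping, varying brightness, and noise injection) can be sketched with plain NumPy. This is a minimal illustration, not the code from the article's appendix: the function names are hypothetical, and it assumes images are `uint8` arrays of shape (height, width, channels) with values in 0 to 255.

```python
import numpy as np


def horizontal_flip(image):
    """Mirror the image left to right (geometric transformation)."""
    return image[:, ::-1]


def rotate_90(image, k=1):
    """Rotate the image by k * 90 degrees counter-clockwise."""
    return np.rot90(image, k=k, axes=(0, 1))


def random_crop(image, crop_h, crop_w, rng):
    """Cut a random crop_h x crop_w window out of the image."""
    h, w = image.shape[:2]
    top = rng.integers(0, h - crop_h + 1)
    left = rng.integers(0, w - crop_w + 1)
    return image[top:top + crop_h, left:left + crop_w]


def adjust_brightness(image, factor):
    """Scale pixel intensities by `factor`, clipping to the 0..255 range."""
    scaled = image.astype(np.float32) * factor
    return np.clip(scaled, 0, 255).astype(np.uint8)


def add_gaussian_noise(image, sigma, rng):
    """Inject zero-mean Gaussian noise with standard deviation `sigma`."""
    noise = rng.normal(0.0, sigma, size=image.shape)
    noisy = image.astype(np.float32) + noise
    return np.clip(noisy, 0, 255).astype(np.uint8)


# Usage on a synthetic image (a stand-in for a real photo).
rng = np.random.default_rng(seed=0)
original = rng.integers(0, 256, size=(32, 48, 3), dtype=np.uint8)
augmented = [
    horizontal_flip(original),
    rotate_90(original),
    random_crop(original, 24, 24, rng),
    adjust_brightness(original, 1.3),
    add_gaussian_noise(original, 10.0, rng),
]
for item in augmented:
    print(item.shape, item.dtype)
```

In practice, a library such as Keras or OpenCV would normally handle these operations, including arbitrary-angle rotation and interpolation, which this sketch leaves out.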

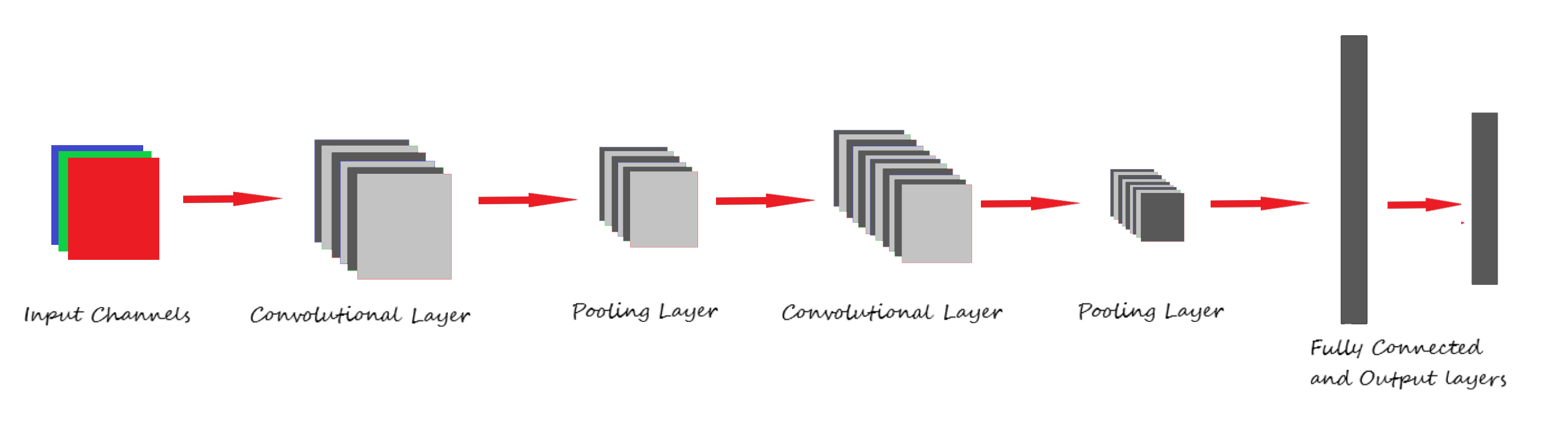

Table 1. Model performance for different applications with and without data augmentation.

In Natural Language Processing models such as BERT (340M), the number of parameters is of the order of a hundred million. Deep learning models trained to perform complex tasks such as object detection or language translation with high accuracy have a large number of tunable parameters, and they need a large amount of data to learn the values of those parameters during the training phase. In simple terms, the amount of data required is roughly proportional to the number of learnable parameters in the model, and the number of parameters is in turn roughly proportional to the complexity of the task.

Oftentimes, when working on specific complex tasks such as classifying a weed from a crop, or identifying the novelty of a patient, it is very hard to get the large amounts of data required to train the models. Though transfer learning techniques can be used to great effect, making a pre-trained model work for a specific task is challenging.

Another way to deal with the problem of limited data is to apply different transformations to the available data to synthesize new data. This approach of synthesizing new data from the available data is referred to as ‘Data Augmentation’.

Data augmentation can be used to address both requirements: the diversity of the training data and the amount of data. Besides these two, augmented data can also be used to address the class imbalance problem in classification tasks.

The questions that come to mind are: Does data augmentation work? Do we really get better performance from the models when we use augmented data for training? Table 1 shows a few case studies indicating the effect of data augmentation on model performance for different applications.

Introduction to Data Augmentation

The prediction accuracy of supervised deep learning models relies largely on the amount and diversity of data available during training. The relation between deep learning models and the amount of training data they require is analogous to that between a rocket engine (the deep learning model) and the huge amount of fuel (the data) the rocket needs to complete its mission (the success of the deep learning model).

DL models trained to achieve high performance on complex tasks generally have a large number of hidden neurons. As the number of hidden neurons increases, the number of trainable parameters also increases. The number of parameters in state-of-the-art computer vision models such as ResNet (60M) and Inception-V3 (24M) is of the order of ten million.
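To make the link between hidden neurons and trainable parameters concrete, here is a small sketch that counts the weights and biases of a plain fully connected network. The helper name and the layer sizes (784 inputs, 10 outputs) are illustrative assumptions and do not come from any of the models mentioned above.

```python
def count_dense_parameters(layer_sizes):
    """Count trainable parameters in a fully connected network.

    `layer_sizes` lists the number of units per layer, input layer first.
    Each pair of consecutive layers contributes a weight matrix
    (fan_in * fan_out) plus one bias per output unit (fan_out).
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        total += fan_in * fan_out + fan_out
    return total


# Hypothetical network: 784 inputs, two hidden layers, 10 outputs.
for hidden in (64, 256, 1024):
    sizes = [784, hidden, hidden, 10]
    print(f"hidden units per layer: {hidden:5d} -> "
          f"parameters: {count_dense_parameters(sizes):,}")
```

Because the weight matrix between two hidden layers scales with the product of their widths, widening the hidden layers increases the parameter count faster than linearly in this setup.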